Good prospects:

Latest Regulatory Filings for SP5

Companies with the best and the worst fundamentals.

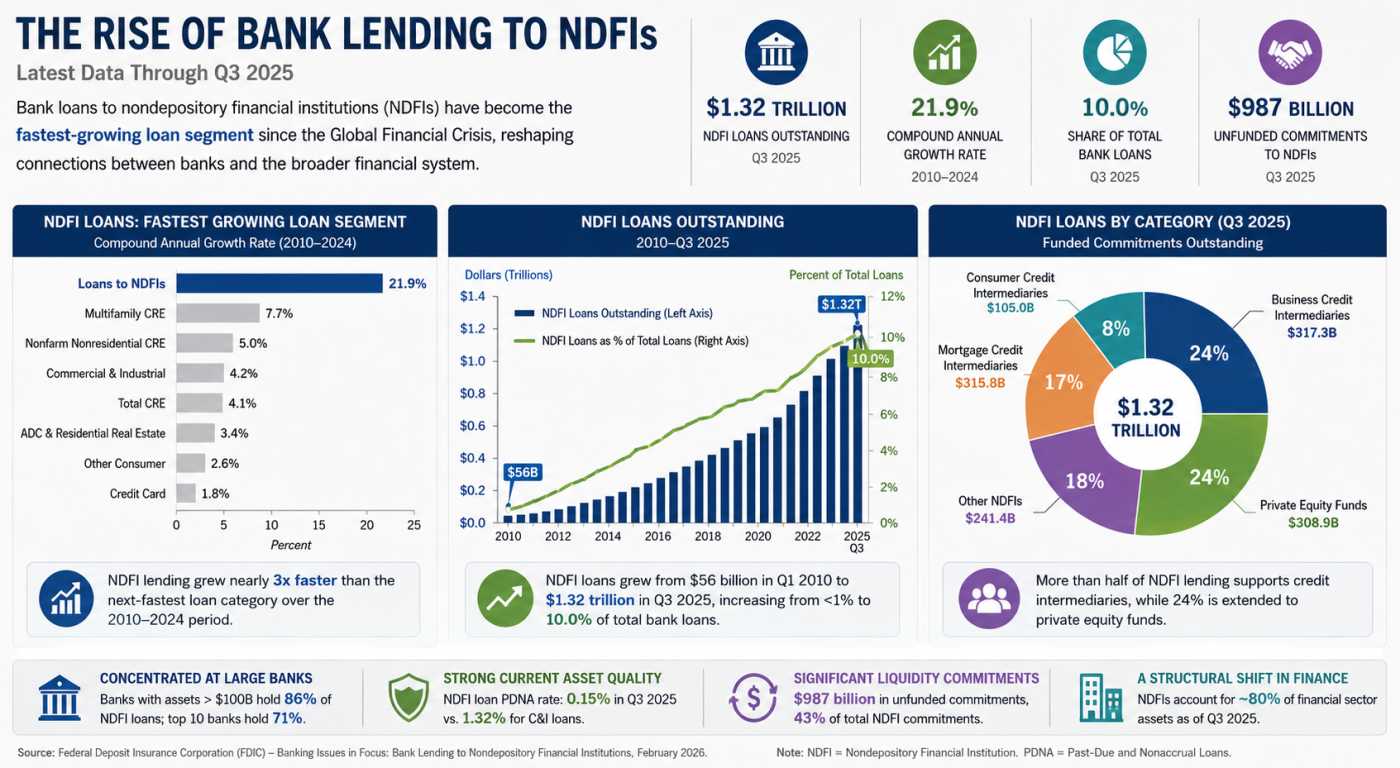

Private Credit’s Secret Banking Backbone Is Growing Faster Than Anyone Expected

America's $5 Trillion Business Handoff Has Already Begun

The Repair Economy Boom in Rural America

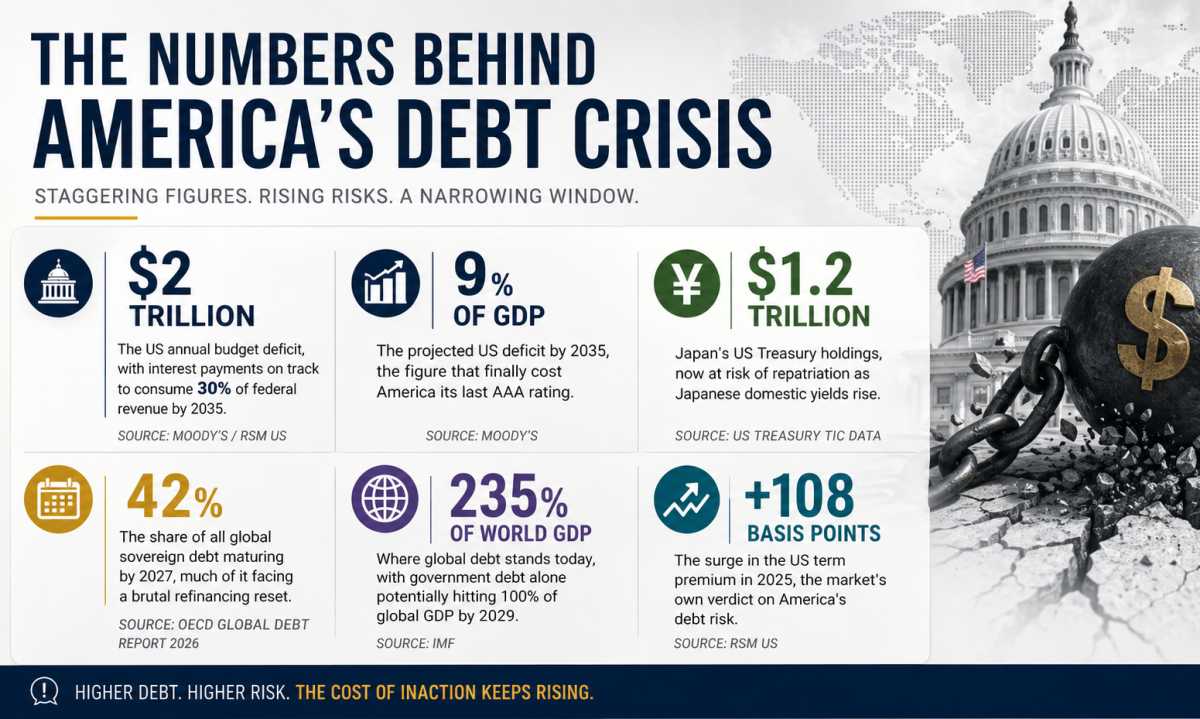

Debt, Deficits & Disaster: The Bond Market Crisis

Not Wall Street, But AI: The Real Force Democratizing Finance Across America

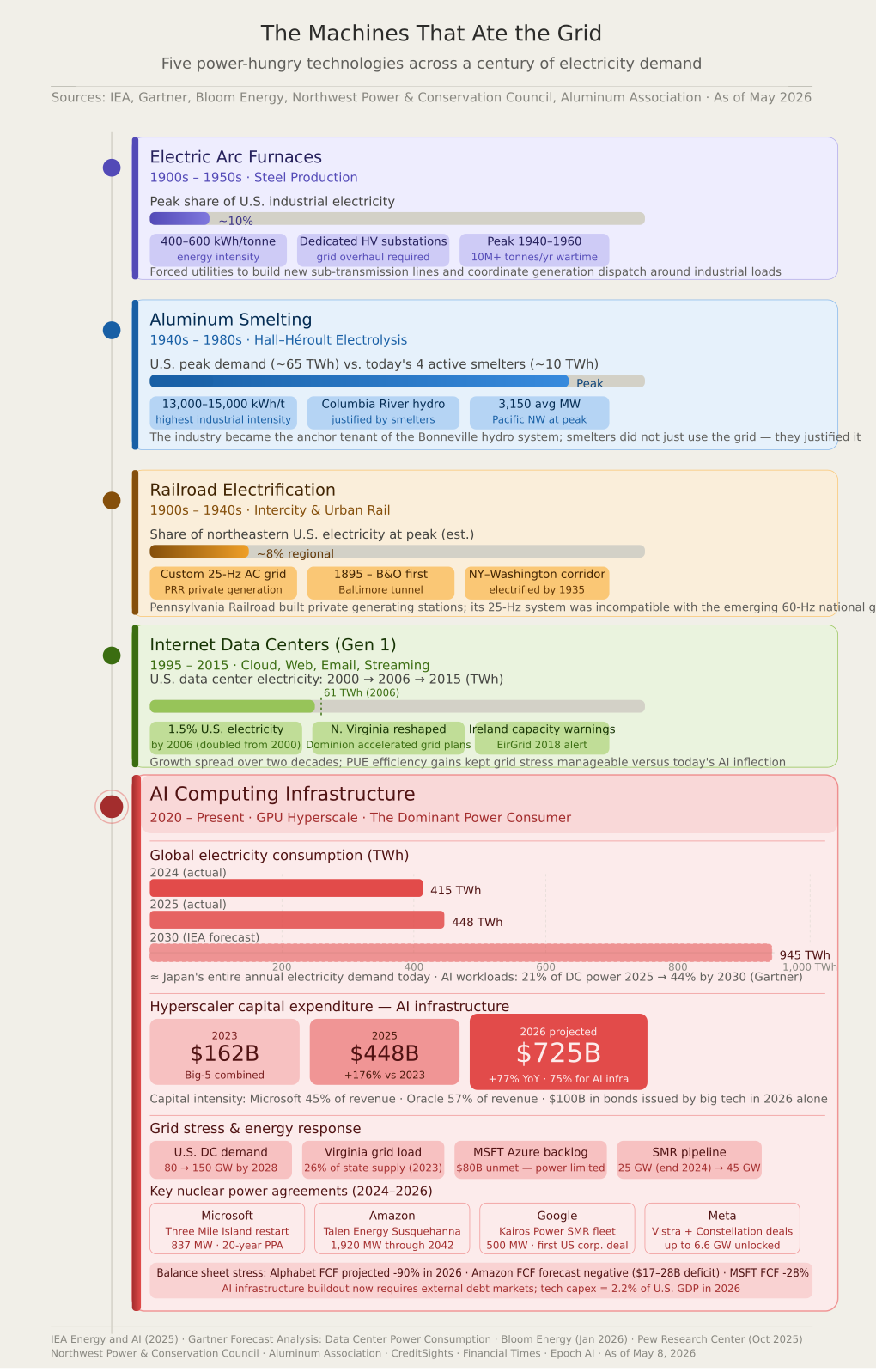

The Machines That Ate the Grid: Five Centuries of Power Hunger

1. Electric Arc Furnaces and the Steel Boom (1900s–1950s)

When electric arc furnaces (EAFs) first emerged at the turn of the twentieth century, they represented the most electricity-hungry industrial technology yet devised. By the mid-1940s, steelmakers in the United States and Europe were operating EAFs that consumed between 400 and 600 kilowatt-hours (kWh) per tonne of steel produced. During the Second World War, when Allied armaments production peaked, U.S. electric furnace steel output climbed to over 10 million tonnes annually, demanding dedicated power supply arrangements that strained municipal grids. Utilities were compelled to build new sub-transmission lines, erect dedicated high-voltage substations, and coordinate generation dispatch specifically around the intermittent but enormous loads of these facilities. The EAF's peak impact on grid infrastructure occurred roughly between 1940 and 1960, when the technology accounted for an estimated 8 to 12 percent of total U.S. industrial electricity consumption. The lesson from the steel era was that transformative industrial loads do not simply attach to existing grids; they reshape them from the ground up.

2. Aluminum Smelting and the Hydropower Age (1940s–1980s)

If steel's electric appetite was formidable, aluminum smelting's was almost incomprehensible for its era. The Hall–Héroult electrolytic process, which remains the industry standard today, requires approximately 13,000 to 15,000 kWh to produce a single tonne of primary aluminum. At peak U.S. production in the 1970s, domestic smelters consumed an estimated 60 to 70 TWh annually, equivalent at the time to the combined electricity demand of several mid-sized states. In the Pacific Northwest alone, the aluminum industry grew to consume 3,150 average megawatts continuously, sufficient to power three cities the size of present-day Seattle for a year, according to the Northwest Power and Conservation Council.

The grid consequences were equally dramatic. The aluminum industry became the foundational anchor tenant of the Columbia River hydroelectric system. Bonneville Dam came online in 1938 partly because the Pacific Northwest was hungry for large industrial customers capable of absorbing its baseload generation. Smelters did not merely use the grid; they justified the construction of some of its most significant infrastructure. By the late 1990s, rising electricity costs, global competition, and deregulated power markets had shuttered most of these facilities. The Aluminum Association now reports that only four primary smelters remain operational in the United States, producing 683,500 tonnes annually with a combined electricity demand of roughly 10 TWh, a shadow of the industry's former dominance.
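The smelting figures quoted in this section mix units (average megawatts, TWh per year, kWh per tonne), so a quick conversion helps compare them. The sketch below is a back-of-the-envelope check only; the function names are illustrative, and it assumes a non-leap year of 8,760 hours.

```python
# Back-of-the-envelope unit conversions for the smelting figures quoted above.
# Function names are illustrative; 8,760 hours assumes a non-leap year.
HOURS_PER_YEAR = 8760


def average_mw_to_twh_per_year(average_mw: float) -> float:
    """Convert a continuous average load in MW to annual energy in TWh."""
    return average_mw * HOURS_PER_YEAR / 1_000_000  # MWh -> TWh


def kwh_per_tonne(annual_twh: float, annual_tonnes: float) -> float:
    """Energy intensity implied by annual consumption and annual output."""
    return annual_twh * 1_000_000_000 / annual_tonnes  # TWh -> kWh


# Pacific Northwest smelter load at its peak: 3,150 average megawatts.
pnw_twh = average_mw_to_twh_per_year(3150)

# Remaining U.S. smelters: roughly 10 TWh for 683,500 tonnes.
current_intensity = kwh_per_tonne(10, 683_500)

print(f"Pacific Northwest smelters: about {pnw_twh:.1f} TWh per year")
print(f"Implied intensity today: about {current_intensity:,.0f} kWh per tonne")
```

Under these assumptions, the intensity implied by the present-day figures falls inside the 13,000 to 15,000 kWh per tonne range cited for the Hall–Héroult process, so the two sets of numbers are at least mutually consistent.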

3. Railroad Electrification and the Urban Grid (1900s–1940s)

The electrification of urban and intercity rail networks between roughly 1895 and 1940 represented one of the first instances where a single transportation technology fundamentally overhauled civic electrical infrastructure. The Baltimore and Ohio Railroad's 1895 installation in the Howard Street Tunnel is widely regarded as the first heavy-duty railroad electrification in the United States. By the 1930s, major railroads including the Pennsylvania Railroad had electrified hundreds of route-miles of the northeastern corridor, while hundreds of urban transit systems operated on dedicated third-rail or overhead catenary systems.

The Pennsylvania Railroad's electrification of its New York–Washington main line, completed in 1935, required one of the largest private infrastructure investments of the Depression era. The railroad built its own dedicated generating stations, erected thousands of miles of transmission and distribution infrastructure, and engineered custom 25-Hz alternating current systems incompatible with the emerging 60-Hz national grid standard. At peak in the late 1930s and 1940s, railroad electrification accounted for a meaningful share of electricity demand in several northeastern states. The transition away from electrified freight rail in the 1950s and 1960s, driven by the economic advantages of diesel, was itself a testament to how electricity-dependent the rail network had become, and to how rapidly grid dependency could be dismantled when a competing technology proved superior.

4. The Internet Data Center Revolution (1995–2015)

Long before artificial intelligence entered the energy discourse, the first generation of Internet-era data centers quietly transformed electricity demand patterns across the developed world. Between 1995 and 2010, the proliferation of web servers, email infrastructure, early cloud computing, and streaming media drove data center electricity consumption from near-negligible levels to a globally significant load. The United States Department of Energy, in a landmark 2007 report by Lawrence Berkeley National Laboratory, estimated that U.S. data centers consumed approximately 61 TWh in 2006, or about 1.5 percent of total U.S. electricity consumption at the time, having doubled from 2000 levels.

The grid impact during this period was geographically concentrated. Northern Virginia's Loudoun County, already dubbed "Data Center Alley," began transforming regional load profiles so substantially that Dominion Virginia Power was compelled to accelerate transmission expansion programmes years ahead of normal planning cycles. Ireland, which aggressively courted data center investment, saw its grid operator EirGrid begin issuing capacity warnings about data center load growth as early as 2018. The generation and transmission buildout required during this phase was substantial but manageable, in part because the load growth was spread over two decades and in part because improvements in Power Usage Effectiveness (PUE), the ratio of total facility power to IT equipment power, steadily reduced energy waste per unit of computation.
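Since PUE is defined above as a simple ratio, a short sketch can show how an improvement translates into less overhead. The facility loads used here are hypothetical round numbers chosen for illustration, not figures from any report cited in this article.

```python
# Minimal sketch of the PUE ratio described above.
# The facility loads below are hypothetical, chosen only for illustration.


def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility power spent on cooling, power conversion,
    lighting, and other non-IT overhead."""
    return 1 - 1 / pue_value


# Two hypothetical facilities, each running the same 1,000 kW of IT load.
older_site = pue(total_facility_kw=2000, it_equipment_kw=1000)
newer_site = pue(total_facility_kw=1200, it_equipment_kw=1000)

print(f"Older site PUE {older_site:.2f}, overhead {overhead_share(older_site):.0%}")
print(f"Newer site PUE {newer_site:.2f}, overhead {overhead_share(newer_site):.0%}")
```

A PUE of 1.0 would mean every watt drawn from the grid reaches the IT equipment, so the closer the ratio gets to 1.0, the less generation and transmission capacity is needed for the same computing load.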

5. Artificial Intelligence Computing: The Current and Dominant Power Inflection (2020–Present)

No technology in the history of the electrical grid has escalated its electricity demands with the speed or scale of artificial intelligence computing infrastructure. What the steel, aluminum, and railroad industries achieved over decades, AI data centers have replicated in years, and the trajectory remains steeply upward.

The Scale of AI's Power Appetite

According to the International Energy Agency's landmark 2025 report, Energy and AI, global data center electricity consumption stood at approximately 415 TWh in 2024, representing about 1.5 percent of global electricity demand, having grown at 12 percent annually for the prior five years. The IEA projects this figure will more than double to 945 TWh by 2030, a volume slightly exceeding Japan's entire annual electricity consumption today. The United States currently accounts for 45 percent of global data center electricity consumption, followed by China at 25 percent and Europe at 15 percent.
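The IEA figures above can be turned into an implied growth rate with a standard compound-growth calculation. This is a rough check rather than the agency's own method; the helper name is illustrative, and the six-year horizon simply assumes 2024 as the start year and 2030 as the end year.

```python
# Implied compound annual growth rate (CAGR) from the IEA figures quoted above.
# The helper name and the six-year horizon (2024 to 2030) are assumptions
# made for this sketch, not the IEA's own methodology.


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that takes start_value to end_value."""
    return (end_value / start_value) ** (1 / years) - 1


consumption_2024_twh = 415
projection_2030_twh = 945

growth_multiple = projection_2030_twh / consumption_2024_twh
annual_rate = implied_cagr(consumption_2024_twh, projection_2030_twh, 2030 - 2024)

# Regional shares of the 2024 total, as quoted above.
regional_shares = {"United States": 0.45, "China": 0.25, "Europe": 0.15}
regional_twh = {
    region: share * consumption_2024_twh
    for region, share in regional_shares.items()
}

print(f"2030 projection is {growth_multiple:.2f}x the 2024 level")
print(f"Implied growth: {annual_rate:.1%} per year")
for region, twh in regional_twh.items():
    print(f"{region}: about {twh:.0f} TWh in 2024")
```

On these inputs the implied rate works out to roughly 15 percent a year, somewhat faster than the 12 percent annual growth reported for the prior five years.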

AI-specific workloads are the primary accelerant. Gartner's research director Linglan Wang noted in November 2025 that AI-optimized servers, which rely on GPU-based parallel processing and consume up to six times more power than conventional racks, are projected to account for 21 percent of total data center power use in 2025, rising to 44 percent by 2030. According to Gartner, the electricity use of AI-optimized servers alone is set to rise nearly fivefold, from 93 TWh in 2025 to 432 TWh in 2030. A Pew Research Center analysis published in October 2025 noted that a typical AI-focused hyperscale facility consumes as much electricity annually as 100,000 households, while the largest facilities currently under construction are expected to consume twenty times that amount.

The concentration of this load is creating acute regional grid stress. A peer-reviewed study published in April 2025 and co-authored by researchers at multiple institutions found that regions including Virginia, Oregon, and Ireland may experience Power Stress Index values exceeding 0.25, a threshold indicating significant local grid vulnerability. In 2023, data centers already consumed approximately 26 percent of total electricity supply in Virginia, a figure that has almost certainly grown since.

The Investment Arms Race: A Balance Sheet Transformation

The financial scale of the AI infrastructure buildout is without historical parallel in the technology industry. According to first-quarter 2026 earnings data compiled by the Financial Times and reported by Tom's Hardware, Alphabet, Microsoft, Meta, and Amazon collectively plan to spend approximately $725 billion in capital expenditure in 2026, a 77 percent increase over their combined record spend of approximately $410 billion in 2025, and itself nearly triple their combined outlay in 2023. Roughly 75 percent of this aggregate spend, approximately $450 billion, is directly tied to AI infrastructure, according to an analysis by CreditSights.

The individual commitments are staggering. Amazon has guided to approximately $200 billion in 2026 capital expenditure. Alphabet has guided to as much as $190 billion, matching Microsoft's stated $190 billion figure, though Microsoft's CFO Amy Hood attributed approximately $25 billion of that total to component price inflation in memory chips and processing hardware. Meta has raised its 2026 guidance to a range topping $145 billion, up from $64–72 billion spent in 2025. Oracle, at $50 billion, represents a 136 percent increase over its prior year.
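The headline total can be reconciled with the individual guidance figures using simple arithmetic. The sketch below treats each company's quoted figure as a point estimate, which is an assumption: Alphabet's is described as "as much as" $190 billion and Meta's as the top of a range.

```python
# Reconciling the combined 2026 capex figure with the individual guidance.
# Each value is treated as a point estimate in billions of U.S. dollars;
# this is an assumption, since some of the quoted figures are upper bounds.
guidance_2026_bn = {
    "Amazon": 200,
    "Alphabet": 190,
    "Microsoft": 190,
    "Meta": 145,
}

combined_2026_bn = sum(guidance_2026_bn.values())
combined_2025_bn = 410  # combined 2025 spend quoted above

# Year-over-year increase as a fraction of the 2025 base.
increase = combined_2026_bn / combined_2025_bn - 1

print(f"Combined 2026 guidance: ${combined_2026_bn} billion")
print(f"Increase over 2025: {increase:.0%}")
```

The four figures sum to the $725 billion quoted earlier, and the increase over $410 billion rounds to the stated 77 percent.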

The effect on corporate balance sheets has been significant and, for some companies, alarming. Pivotal Research projected that Alphabet's free cash flow would fall roughly 90 percent in 2026, to approximately $8.2 billion from $73.3 billion in 2025. Morgan Stanley analysts projected Amazon could post negative free cash flow of nearly $17 billion in 2026, a figure Bank of America placed as high as a $28 billion deficit. Amazon has disclosed in an SEC filing that it may need to raise additional equity and debt as its buildout continues. Alphabet's long-term debt quadrupled in 2025 to $46.5 billion, following a $25 billion bond sale in November of that year. The IEEE Communications Society's technology blog reported in late 2025 that big tech companies had collectively issued $100 billion in bonds in 2025 alone to fund AI capital expenditure, with investors demanding record protection via credit default swaps, a development that signals credit markets are beginning to price the execution risk of this buildout.

Capital intensity has reached levels previously considered unthinkable in the technology sector. CreditSights estimated that capital expenditure as a share of revenue, a metric known as capital intensity, reached 57 percent at Oracle and 45 percent at Microsoft in the most recent quarter, with further increases expected through 2026. Meta's contractual commitments rose by $107 billion in a single quarter in early 2026, according to reporting by Om Malik, implying total forward commitments of approximately $190 billion. The company committed to nearly five years' worth of its pre-2024 annual capex in just ninety days.

Despite the pressures, the chief executives involved remain publicly committed. Amazon CEO Andy Jassy, in his 2026 annual letter to shareholders, described AI as "a once-in-a-lifetime opportunity where the current growth is unprecedented and the future growth even bigger," pledging the company would not be "conservative" in how it played the opportunity.

Grid Overhaul: A Crisis in Slow Motion

The speed of AI infrastructure deployment has outpaced the ability of electricity grids to accommodate it. Microsoft's CFO Amy Hood disclosed in early 2026 that the company has an $80 billion backlog of Azure orders it cannot fulfill due to power constraints, a supply gap driven not by a shortage of servers or capital but by the inability to connect new data centers to the grid quickly enough. The IEA's 2025 update, Key Questions on Energy and AI, published in April 2026, found that data center developers, constrained by slow grid connections, are advancing a large number of projects with onsite natural gas-based power generation, largely in the United States. The workaround accepts higher carbon intensity in exchange for speed.

A Bloom Energy report published in January 2026 projected that U.S. data centers' total combined power demand will nearly double between 2025 and 2028, jumping from 80 to 150 gigawatts, the equivalent of adding a country with the energy needs of Spain within just three years. The pipeline of conditional offtake agreements between data center operators and small modular reactor (SMR) projects has grown from 25 gigawatts at the end of 2024 to 45 gigawatts as of early 2026, according to the IEA.

The Nuclear Pivot

Faced with a baseload power requirement that intermittent renewables cannot reliably satisfy and a grid connection queue measured in years, the major technology companies have made a decisive pivot toward nuclear energy. The sequence of commitments since late 2024 has been rapid and consequential. Microsoft signed a 20-year, 837-megawatt power purchase agreement with Constellation Energy to restart Three Mile Island Unit 1 in Pennsylvania, a facility that had been shut since 2019, targeting a 2028 return to service. Amazon expanded its nuclear offtake agreement with Talen Energy's Susquehanna Steam Electric Station in Pennsylvania to 1,920 megawatts through 2042. Google signed a 500-megawatt agreement with SMR developer Kairos Power, the first corporate SMR fleet deal in the United States, and in May 2025 committed early-stage capital to Elementl Power for three reactor sites totaling 1.8 gigawatts. Meta announced a 20-year power purchase agreement with Constellation Energy to buy 1.1 gigawatts from the Clinton Clean Energy Center in Illinois, and separately struck arrangements with Vistra that unlock the option for up to 6.6 gigawatts of nuclear capacity, including a potential new 300-megawatt SMR. Oracle is designing data centers intended to be powered directly by three small modular reactors, aiming for grid independence.

The scale of these nuclear commitments represents a fundamental reshaping of the energy industry's trajectory. The IEA noted in April 2026 that AI is now a major source of momentum for the nuclear and advanced geothermal industries, a development few energy analysts predicted even three years ago.

The Pros and Cons of AI's Energy Dominance

The economic and social productivity gains plausibly attributable to AI are large. The IEA's Energy and AI report observed that power consumption per AI task is declining at a rate unprecedented in energy efficiency history, driven by continuous hardware improvements (most recently in the NVIDIA H100 and Blackwell GPU families) and software optimisation. More capable AI systems are enabling breakthroughs in drug discovery, materials science, logistics optimisation, and scientific research that could produce economic value orders of magnitude larger than the energy cost of the computation required. The IEA acknowledged that AI is emerging as a general-purpose technology, much like electricity itself.

However, the costs and risks are real and material. On the environmental dimension, a peer-reviewed study published in January 2025 projected that AI servers are expected to drive annual increases in U.S. water consumption of 200 to 300 billion gallons and add 24 to 44 million metric tons of CO2-equivalent emissions by 2030. The geographic concentration of data center demand is already producing local electricity price increases for residential and small commercial customers in regions like Northern Virginia and parts of Ireland. Consumer Reports, in a March 2026 investigation, noted that despite a White House-sponsored pledge by tech executives to limit the effect of data centers on residential electricity bills, many local residents and consumer advocates see mainly downsides to the expansion.

On the financial dimension, the enormous capex commitments are being made in advance of definitive evidence that AI revenues will grow sufficiently to justify them. The depreciation burden from GPU and server assets, which the IEA notes are short-lived (typically three to five years), is already compressing technology sector profit margins. Deutsche Bank analysts described Alphabet's infrastructure buildout as creating conditions warranting close scrutiny from fixed-income investors. The bond market's growing use of credit default swaps as protection against hyperscaler debt is a rare and notable signal of institutional caution toward what has otherwise been treated as an unambiguously positive investment cycle.

The broader grid question remains unresolved. While the IEA projects that data center electricity consumption will more than double to 945 TWh by 2030, the agency also notes that AI is accelerating investment in technologies (nuclear, geothermal, advanced grid management) that could ultimately expand the clean energy supply available to the entire economy. Whether the grid stress created by AI's power appetite proves to be a temporary constraint or a longer-term bottleneck will depend heavily on permitting reform, transmission investment, and the pace at which SMR and other advanced generation technologies can be brought to commercial scale. As of May 2026, the answer to that question remains genuinely uncertain, and consequential for energy markets, corporate balance sheets, and electricity users worldwide.

When Flooding Pays: A New Financial Bet

Breakfast for Life How Local Diners and Hardware Stores are Outsmarting Amazon

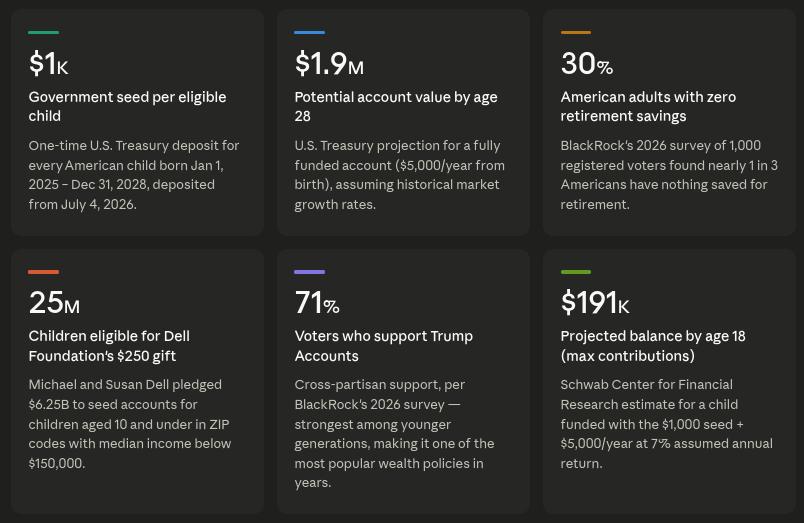

Before They Can Walk, They're Invested: How Trump Accounts Are Transforming Financial Culture

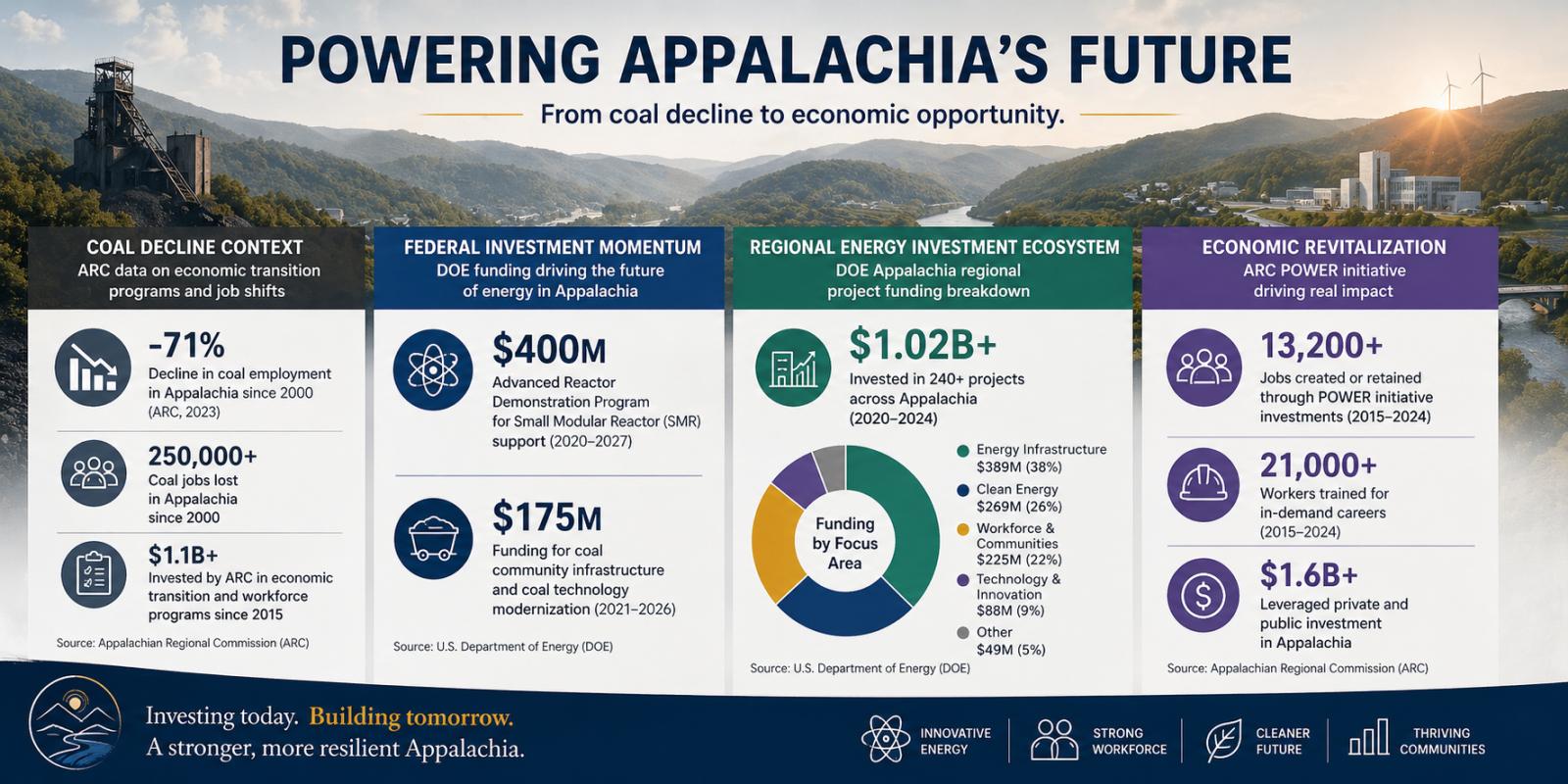

The Appalachian Energy Reboot: Inside the Unexpected Nuclear Startup Boom